I finally got the time to get some eclipse pictures together! Between increased duties at work, the long drive back from Tennessee, and Hurricane Harvey, I have been a wee bit busy, sorry!

Almost a year ago my wife and I decided we were going to go see the 2017 total solar eclipse. Looking at a list of total solar eclipses, this was the only one crossing the United States until 2024, so we did not want to miss it. For us, the best place to see the eclipse was Tennessee, so that is where we headed.

Early on Saturday the 19th my wife and I loaded up Buster (my MINI Countryman) and headed up to Memphis. We spent the night there and had a little fun on Beale Street. Since it was my wife’s birthday and she was being nice enough to let me head to the eclipse, I figured she should have a little fun, and we did! We did make sure to call it a night early enough, however, that we wouldn’t have an issue driving up closer to the central line the next morning.

Sunday the 20th we ate lunch at the Hard Rock on Beale Street (Hard Rocks are kinda our little tourist thing) and then headed for Paris, TN. This was our base camp, as it allowed me to keep an eye on the weather and had easy access north, west or south to wherever the skies were clear. I could not afford to miss this, so I was making sure.

I made plans for three locations: one primary, one further south and one further north. A good portion of the evening was spent switching between weather apps to see who said what, all while I had the Weather Channel going on the TV. Yeah, I was a little over the top.

After figuring out what time I would have to get up to make the worst case destination in my plans, we went out and had a nice little dinner, then headed to bed.

The next morning the weather was awesome, so we headed to the Eclipse Event in Clarksville, TN at the Old Glory Distillery, which was right in the middle of the path of totality for the 2017 total solar eclipse. The people there were awesome and their spirits were awesome too! Unfortunately their stuff is only available in Tennessee, so that may mean we have to go back, heh.

All over Clarksville there were vendors on the side of the road selling everything from water to t-shirts. There was a really great turnout, which I think is fantastic. Some people live their whole lives and never experience one of these, so it was great to see so many people, especially children, coming out to watch it.

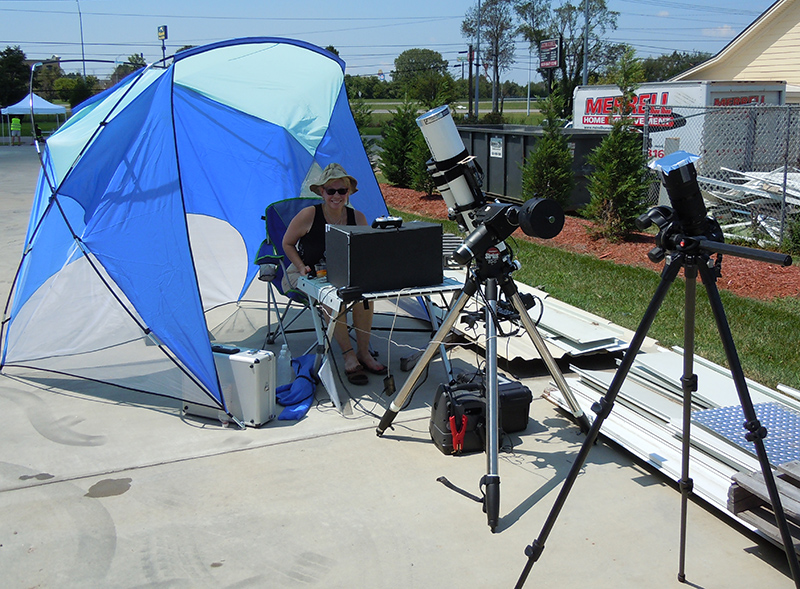

I set up the 110mm Orion refractor I use for imaging on my Orion Sirius mount, installed my Thousand Oaks glass filter and then fired up the laptop. Next to this setup I put my old Nikon D90 DSLR on a tripod with a 200mm f/2.8 ED lens and a 1.4x teleconverter, using Baader Solar Film as the filter. I also had a video camera, also filtered with Baader film, mounted below the refractor.

It was hot in the parking lot, so I put up a little shade tent and let my wife sit under it watching the laptop while I stayed out in the sun making sure everything went well. It didn’t. It took forever to get the mount to line up on the sun, then it wouldn’t track, then a cable came unplugged. It was a nightmare. With just a couple of minutes to first contact I told my wife I did not think I would be ready in time. Fortunately, I was wrong.

I also tried to take a video of the total solar eclipse but had a problem with the video camera, so unfortunately there is no video.

Yes, I tested everything well in advance of the event, but even so, things can still go wrong. I am just thankful that the only real disappointment was that the video was completely useless. Both cameras got plenty of great images.

I did decide that my old method of using the shadow behind the telescope to line it up on the sun was a terrible idea. I have since acquired a TeleVue Sol Searcher and will never go back. This thing makes it so very simple to get any telescope lined up in seconds.

Let’s take a look at some images!

The first one is a compilation of images as the sun changed. I have always wanted one of these images. I did make one of the last eclipse I imaged, which was an annular eclipse. While not quite as amazing as a total eclipse, the annular was still incredible, and the ring of fire it shows when it reaches annularity is really nice.

Next we have the money maker, the total eclipse. The rays coming out are simply astounding.

And here is one of the chromosphere:

This is an enlargement from the D90, which is far inferior to the ones taken through the telescope with the D7000. However, you can clearly see the red chromosphere showing up as pink here. This only happens for a few seconds, so the fact that I captured it is pretty cool.

One note I want to make is that when you get the chance to see one of these, do not pass it up. Being there and looking around is simply amazing. At no other time in my life have I seen light like that: dark but very edgy, with multiple shadows because of the way the sunlight shines around the moon. It is nothing like you think. It is way cooler.

Lastly is this image:

After the eclipse was over it was time to go inside and buy some of their limited edition Solar Shine, made just for the eclipse. It was all a blast!

Driving back to our hotel, I noticed that virtually all of the vendors on the side of the roads were gone, which I found odd as there were a ton of people on the roads heading back to wherever they were staying for the night. Seems to me that would have been an excellent time to put everything on discount and sell it rather than tote it back with you.

I know Sue Ann and I brought more back than we took 😉

I would really like to thank all the people at Old Glory, they were amazing! Also, thanks to all the people who bought my book, How to Take Pictures of an Eclipse. Hope to see you all at the next eclipses in 2023 (Annular) and 2024 (Total here in Texas, yeah!).

I hope you enjoyed reading about our eclipse journey!
